It’s Time to Verify the Internet

Twitter’s new Birdwatch feature is a good step, but more needs to be done.

Harmful language, violent rhetoric, and misinformation are all too common on social media. A 2018 study by the Anti-Defamation League reported that 53% of Americans had experienced online harassment or hate speech. Given that violent rhetoric fueled the insurrection at the United States Capitol earlier this month, that percentage is likely higher today.

Social media companies and internet providers have been slow to implement restrictions, primarily due to Section 230 of the 1996 Communications Decency Act. This law shields tech companies and internet providers from liability resulting from user-generated content. Essentially, Twitter is not responsible for what users post. As a result, Twitter and its competitors take a slow and systematic approach to addressing hate and harassment accusations on their platforms. This inaction, however, has allowed harmful content to flourish online.

While Section 230 provides liability shields, it does not provide tech companies with a free pass. Social networking sites can be held liable if they knowingly permit illegal content. To comply with this stipulation of the law, companies independently moderate content across their sites. Each network sets its own rules and enforcement tactics and employs a team of moderators to ensure illegal and potentially harmful content is removed.

Crowdsourced Content Moderation

This week, Twitter announced a new crowdsourced content moderation tool called Birdwatch. Users can now flag and leave notes on factually dubious or potentially harmful tweets. These notes are shown to other users, signaling that the content is potentially inaccurate. Birdwatch is intended to help content moderators act on tweets that violate Twitter’s rules or distribute illegal content. Most social networks have a similar program for users to report inappropriate content.

In early 2018, a group of internet advocates and organizations formed The Santa Clara Principles, a set of baseline transparency standards for content moderation. As a result, many social media companies now share data on how much content they remove for rule violations.

In the first half of 2020, Twitter removed 1.9 million tweets for violating company rules. Facebook moderators removed 107.5 million posts in Q1 2020. Throughout 2019, Reddit deleted 137.2 million posts, or 4.7% of all content posted to the site.

While not a perfect solution, crowdsourced content moderation is a step toward ensuring a safe and factually accurate internet. However, additional steps must be taken to curtail misinformation, violent rhetoric, and illegal content online. One such step is account verification.

Verified Accounts

Twitter first implemented account verification in 2009, and most social media companies now have a process for verifying some users. Verified accounts are identified with a badge of authenticity: the blue checkmark. Twitter’s verification system is currently suspended while rules and processes are reevaluated. According to draft rules released in December, Twitter’s new verification process, launching this year, will focus on “authentic, notable, and active” accounts.

Instead of ambiguous definitions of who is “notable” and who is not, Twitter should provide all users access to verification. Then, verified users should have tools to prevent unverified content from appearing within their feed. Yes, this is restrictive and would relegate unverified users to a walled-off portion of the internet. Still, it would allow a safer and much more easily moderated internet for those opting into verification.

A 2012 study published in Computers in Human Behavior found “anonymity, invisibility, and the lack of eye-contact” fuel toxic online interactions. Another study, published in 2019 in the Aslib Journal of Information Management, determined that the users most likely to post hateful content online largely remained anonymous. Verifying accounts and reducing anonymity should decrease dangerous and abusive internet behaviors.

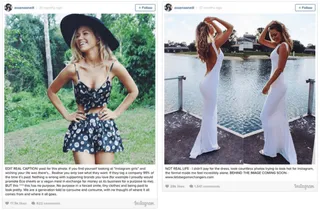

In December, a New York Times opinion piece alleged Pornhub, the internet’s largest user-generated porn site, failed to adequately moderate content. The allegations of child sex-trafficking and abuse led Visa and Mastercard to consider stopping all payments processed on Pornhub. Facing mounting backlash, Pornhub deleted every video uploaded by an unverified account. As a result, 8.8 million videos, or 65% of Pornhub’s entire content library, were erased from the site.

Covering the Pornhub action, Vice News spoke with many verified performers who applauded the decision as a means to reduce abuse and piracy among the unverified accounts. While it is unfair to assume all 8.8 million videos Pornhub deleted contained illegal content, the purge is an example of the power of verifying user accounts.

Verification for all users is not a radical or new concept. The secure messaging service Keybase, founded in 2014 and now owned by Zoom, provides all users with public verification. Users post a publicly available tweet containing a randomly generated code that Keybase uses to verify the account’s authenticity. The system then links the verified account back to the user’s Keybase encryption key. This way, Keybase users can ensure they communicate with the correct person both on and off the platform. Twitter and other major social networks should follow Keybase’s lead and provide verification options to all users, not just a select few.
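For readers curious how a post-a-code check like this can work, here is a minimal sketch in Python. It follows only the simplified flow described above and is not Keybase's actual implementation. The class name, method names, handle, and key fingerprint are all hypothetical, and the text of the public post is passed in directly rather than fetched from Twitter.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class VerificationService:
    """Toy stand-in for a verification service (hypothetical, for illustration)."""
    pending: dict = field(default_factory=dict)   # handle -> (code, key fingerprint)
    verified: dict = field(default_factory=dict)  # handle -> key fingerprint

    def start(self, handle: str, key_fingerprint: str) -> str:
        """Issue a random one-time code for the user to post publicly."""
        code = secrets.token_hex(16)
        self.pending[handle] = (code, key_fingerprint)
        return code

    def confirm(self, handle: str, public_post_text: str) -> bool:
        """Link the handle to its key if the public post contains the issued code."""
        if handle not in self.pending:
            return False
        code, key_fingerprint = self.pending[handle]
        # Assumption: the code appears as its own whitespace-separated word.
        if code not in public_post_text.split():
            return False
        self.verified[handle] = key_fingerprint
        del self.pending[handle]
        return True


service = VerificationService()
code = service.start("@alice", "ABCD1234")  # hypothetical handle and fingerprint
print(service.confirm("@alice", f"Verifying myself: {code}"))  # True
```

The point of the design is that only someone who controls the account can publish the code from it, so a matching public post ties the account to the key without the service ever needing the account's password.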

In November, Medium founder Ev Williams pondered verifying users and invited public responses. “Though the dual-class aspect could be debated,” Williams said regarding verified and unverified accounts, “I do still think there’s value in verification in building trust into a platform and have thought about doing something similar on Medium.”

Medium’s Partner Program requires users seeking payment for their content to provide a W-9 with a Social Security Number or Tax ID. This information could serve as a basis for easy verification of Medium accounts. Facebook and Instagram have a verification tool requiring legal documents and utility bills to verify businesses, though users must first make it through automated qualification checks before submitting. These barriers should not exist for users seeking verification and access to verified-only feeds.

Verification of hundreds of millions of users is a massive undertaking. Still, it would be a large and productive step in reducing extremist and abusive behaviors online. Granted, verification alone is not a magic wand that erases all violent and false content.

Earlier this year, Twitter removed former President Donald Trump’s verified account for continued violation of company rules. While verification did not inhibit Trump’s online conduct, removing his account reportedly reduced election misinformation by 73% across Twitter’s platform. Bots and unverified followers had repeatedly amplified and promoted the former president’s content. Verification and the ability to automatically mute unverified users would also dramatically reduce the spread of such harmful content.

Verification does not mean users must lose their anonymity. Twitter verifies countless brand, product, and corporate accounts. A similar process should be implemented to verify accounts for people still wishing to remain anonymous.

Verification is an option all social media companies should provide to end users, regardless of their perceived notability. This action, combined with the ability to mute content from unverified users, is a huge step toward removing harmful, illegal, and abusive behavior from the internet.