ChatGPT, the Writer’s Strike, and the Future of Content Writing

This Week In Writing, we explore a middle-of-the-road approach to ChatGPT and the future of writing.

The Writers Guild of America is on strike. I'm not fully versed in the provisions the Guild is striking over, but I know one of them is protection against the absolute tidal wave of AI. AI can't write with feeling or emotion (yet), but the WGA is wise to address the inevitable point where it will.

AI developments are coming fast and furious and are honestly hard to keep up with. This isn't a scientific poll, but I'm using ChatGPT more often in everyday situations and know that my colleagues are, too. Today, I want to address the evolving state of AI writing tools, how to potentially use them responsibly, and what it means for the future of writers.

First, this is one of those situations where views change as the technology evolves. I've always looked at generative AI as a functional tool writers can use in their arsenal, but not something that should be used solely to create "content" (boy, do I dislike that word). I'm sticking with this stance, but the lines are starting to blur.

Currently, The Writing Cooperative rules state you must disclose the use of a generative AI. Not one submission in the last four weeks has done so. Does that mean no one used ChatGPT to build their submissions? Maybe, though I find it highly unlikely. Someone on one of the channels recently questioned the policy, asking what happens when all writing tools and apps have generative AI built in. It's a really good question.

Let's look at Grammarly for a minute. Technically, Grammarly has always been an AI company. Their fancy algorithm determines the most likely order of words, and it considers that arrangement grammatically correct. This description is an oversimplification, but it works. Now, Grammarly is going deeper into generative AI with their Go product. Is it different from what they've been offering simply because it creates longer passages? I don't know. I don't, however, think writers need to disclose when they use Grammarly. So what does that say?

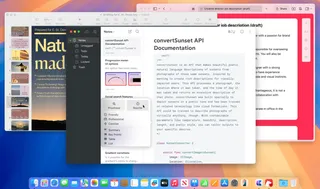

Lately, I've used ChatGPT for multiple projects in what I think are responsible ways. Here are a few ways I've used the tool recently:

- Revising existing passages by using the prompt "revise this:" and entering the paragraph;

- Asking for subheadings when my mind draws a blank by using the prompt "what is a one-word subheading for the following paragraph:" and entering the text;

- Taking my bullet point notes from client calls and asking ChatGPT to put them into complete sentences using the prompt "take the following notes and turn them into coherent sentences:" and then entering my bullet points.

Additionally, I've been working with the ChatGPT API to essentially build a fancy MadLib for my nonprofit clients. They'll eventually input a few pieces of information, which I'll use behind the scenes to combine into a text prompt run through the API. Ultimately, this will help clients better express their ideas and provide me with better information when working with them.
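To make the MadLib idea concrete, here is a minimal sketch in Python of how a few inputs could be combined into one text prompt. The template wording, the field names (`organization`, `mission`, `call_to_action`), and the `build_prompt` helper are all made up for illustration; they are assumptions, not the actual setup. The sketch stops at building the prompt and deliberately leaves out the API call.

```python
from string import Template

# Hypothetical template and field names, chosen only for illustration.
PROMPT_TEMPLATE = Template(
    "Write a short paragraph for $organization, a nonprofit that $mission. "
    "The paragraph should ask readers to $call_to_action."
)

REQUIRED_FIELDS = ("organization", "mission", "call_to_action")


def build_prompt(fields: dict[str, str]) -> str:
    """Fill the template with client inputs, rejecting blank or missing fields."""
    cleaned = {key: value.strip() for key, value in fields.items()}
    missing = [name for name in REQUIRED_FIELDS if not cleaned.get(name)]
    if missing:
        raise ValueError(f"Missing fields: {', '.join(missing)}")
    return PROMPT_TEMPLATE.substitute(cleaned)


if __name__ == "__main__":
    # Example inputs are invented; the resulting string is what would be sent on.
    print(build_prompt({
        "organization": "River Trust",
        "mission": "restores local wetlands",
        "call_to_action": "volunteer this spring",
    }))
```

Checking for blank fields before filling the template keeps a half-completed form from turning into a vague prompt, which is most of the point of a MadLib approach.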

I'd like to think these are all responsible ways to use ChatGPT in my regular writing process. However, I'm torn by the dichotomy here. On one hand, as a writer myself, I want to advocate for others and their livelihoods. Writers should be paid for their work, and the WGA is right to ask for AI protections. On the other hand, I see how ChatGPT saves me time and enhances my existing workflow. Like Natalie Imbruglia, I'm torn.

I still don't think generative AI should be used solely to create entertainment. I don't want to read a personal essay penned by an AI, nor do I think the next blockbuster film should be written by an AI that knows what will likely make the most money. Will I notice these things when they happen? Maybe at first, but over time, probably not.

What do you think? Are you torn like I am, or are your views of ChatGPT and generative AI rock solid?

PS: Besides Grammarly and asking how to spell Imbruglia, everything in this article came out of my head.

Let's check in on Bluesky...

After talking about Bluesky and other "Twitter alternatives" last week, one of you kind people gave me an invite to the platform. My initial impression? It's chaotic.

I think Bluesky is intentionally inviting journalists, Twitter clout chasers, and meme lords in the first wave to try and garner some of this initial hype. It's why you keep seeing articles that say Bluesky is the next great thing despite only having roughly 60k users.

To me, Bluesky is the latest version of Clubhouse, the overhyped social platform that quickly rose to prominence and just as quickly died. It's invite-only and going after the "cool kids" from Twitter. Sure, it creates an initial buzz, but it didn't work out for Clubhouse. Maybe it will for Bluesky? I don't know.

Scrolling through Bluesky feels like the tech equivalent of the White House Correspondents’ Dinner mixed with dick jokes. It's a bunch of political and journalist nerds mixed with shitposters. That’s not inherently a bad thing, but is that what we want from social media? Or is that exactly what we want from social media?

Related Reads

Don’t Take My Word for It

• CraftThis Just In: Personalized recommendations are the new algorithms and the best way to build a true audience.

Why Make Anything if You Don’t Think It Will Be Great?

• CraftThis Week In Writing, we discuss greatness and how chasing it is a possible and noble goal.

Pay People Not Platforms

• PublishingThis Week In Writing, we look at why Substack’s collapse is actually a good thing for paid newsletters.

Let's Make the Internet Personal Again

• Featured PublishingThis Week In Writing, we look at the once-in-a-generation opportunity to create a new internet filled with fun and originality.

Raising the Bar at the Writing Cooperative

• EditorialThis Week In Writing, we look at changes to our publication standards and what they mean for you.

Advent, Waiting, and the Year of Transitions

• LifeThis Week In Writing, we look back at the year that was and determine what it means for the year to come.

Refilling the Creativity Tank

• LifeThis Week In Writing, we discuss what happens when creativity finds other outlets.

Celebrate Giving Tuesday

• LifeThis Week In Writing, we take a quick break from our regularly scheduled programming to celebrate nonprofit organizations.

It’s Time We Discuss Medium

• PublishingThis Week In Writing, we address the platform that has supported my writing for nearly a decade.

My First Year on Mastodon and the Future of Social Media

• Social MediaThis Week In Writing, we look back at how social media fractured and why it’s a good thing for us all.

The Economics of a Self-Hosted Newsletter

• PublishingThis Week In Writing, we talk about what happens when you eliminate platforms and go after it on your own.

Trick or Treat?

• CraftThis Week In Writing, we talk about pen names and whether they make sense for writers.

A New Era Begins

• PublishingThis Week In Writing, we explore the internet’s current metamorphosis and how you can be part of the revolution.

My History of Blogging

• PublishingThis Week In Writing, we celebrate the blog, explore the pendulum of online writing, and double down on quality.

An Update on Spam Submissions

• EditorialThis Week In Writing, we talk about spam submissions to The Writing Cooperative and look at some of your thoughts on being called AI.

Would You Want to Know if I Thought Your Writing Sounded Like AI

• EditorialThis Week In Writing, we talk about submissions to The Writing Cooperative and how to avoid false accusations.

How I Feel About Engagement Numbers

• PublishingThis Week In Writing, we discuss what engagement means and if I get discouraged by a perceived lack thereof. Plus, a look at the future (again).

My Writing Is About Building Community

• PublishingThis Week In Writing, we highlight some of the people I’ve met writing online and answer some of your questions.

It’s Time for a Fresh Start

• PublishingThis Week In Writing, we talk about new Apple products, home renovations, and changes to the newsletter.

Choose Your Own Design

• PublishingThis Week In Writing, we explore the wonderful world of blogs, where writers truly get creative.

Expanding Universes Make Better Stories

• CultureThis Week In Writing, we look at how worldbuilding is an essential part of epic storytelling.

Your Questions Answered

• EditorialThis Week In Writing, we recap a successful Medium Day and address some of the questions I didn’t have time to answer.

Saving Frequently Isn’t The Only Way To Backup Your Writing

• CraftThis Week In Writing, we take a hard lesson from the latest Twitter/X hijinks. Plus, we look at what “human writing” means.

MIT Says ChatGPT Improves Bad Writing, But At What Cost?

• AIThis Week In Writing, we explore how ChatGPT and Grammarly are making us all sound the same.

Do CTAs Even Work Anymore?

• PublishingThis Week In Writing, we explore the “necessary evil” of calls to action and ask if they are any better than tacky banner ads.

AI Is Now Everywhere

• AIThis Week In Writing, we talk about Google’s new AI plan, what it means for writers, and why resistance is futile.

My Ghostly Strategy: Avoid the Graveyard

• PublishingThis Week In Writing, we fully explore how I’m building Ghost into a self-hosted content hub and how you can too.

Another Platform Collapses

• Social MediaThis Week In Writing, we talk about Reddit and what it means for centralized communities moving forward.

The Problem With Creative Entitlement

• AIThis Week In Writing, we explore how AI tools amplify the sometimes problematic relationship between creator and consumer

My Return to Journaling Failed Miserably

• LifeThis Week In Writing, we talk about good intentions, rumored Apple products, and buying domain names

Let's Talk About Numbers

• PublishingThis Week In Writing, we talk about the importance of metrics and why I barely pay attention to mine.

ChatGPT, the Writer’s Strike, and the Future of Content Writing

• AIThis Week In Writing, we explore a middle-of-the-road approach to ChatGPT and the future of writing

BlueSky, Mastodon, and Notes; Oh, My!

• Social MediaThis Week In Writing, we talk about all the “Twitter Alternatives” and what makes the most sense for writers.

How Not To Approach an Editor

• EditorialPlus, here is an update on my participation in Medium’s Boost program and how not to approach an editor.

We Have to Talk About Platform Proliferation

• Social MediaThis Week In Writing, we ask why no platform is content on doing one thing well and instead want to do all things poorly.

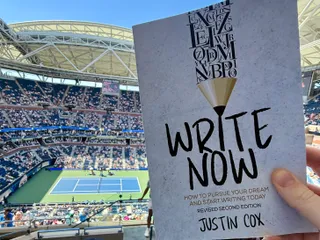

On Tennis and Writing Breaks

• LifeThis Week In Writing, I discuss my prolonged break from daily writing and follow up on last week’s Substack article.

We Have to Talk About Substack

• Featured PublishingThis Week In Writing, we talk about Diffusion of Innovation Theory and dying platforms.

I Don't Want to Talk to You on WhatsApp

• Social MediaThis Week In Writing, we address an impersonation issue, talk about scammers, and settle a Mastodon issue.

Stop Creating Quantity and Start Creating Quality

• EditorialThis Week In Writing, we discuss Medium’s new Boost program and why the vast majority of submissions lately have been atrocious.

1903 Days...

• BurnoutThis Week In Writing, we talk about writing streaks and why letting them go is okay.

You Have Questions, I May Have Answers

• CraftThis Week In Writing, we celebrate International Question Day by listening to Selena Gomez. What does that have in common? Keep reading!

AI Is Coming for Content Creators

• AIThis Week In Writing, we look at how AI is changing the content landscape and why that might be a good thing.

The Era of Centralized Platforms Is Over

• Featured PublishingThis Week In Writing, we discuss whether you should still own a website if you publish on Medium or Substack.

Are You Bound Up

• GrowthThis Week In Writing, we explore the concept of root-bound plants and how we can unknowingly follow the same path.

How Will History Remember Your Writing?

• CraftThis Week In Writing, we talk about the magic found in old books

How I Come Up With Writing Topics

• CultureThis Week In Writing, we explore topic generation while celebrating the best damn band in the land!

Introducing My Writing Community!

• EditorialA new way to connect with writers, discuss your interests, and receive feedback on your creative endeavors.

Are You Begging for Eyes in the Attention Economy

• Featured PublishingThis Week In Writing, we explore the internet’s move away from the attention economy and how writers can make the web more personal

Use Better Words to Be More Inclusive

• CraftThis Week In Writing, we talk about words to avoid in 2023, a special offer from a friend, and Medium joining Mastodon

What Biases Do You Bring to Your Projects

• CraftThis Week In Writing, we explore biases in our creative pursuits and how those biases can translate to AI-generated content.

Welcome to 2023. Now Take A Nap.

• CraftThis Week In Writing, we kick off a new year with a chat about goals, self-care, and naps.

I Created a New Language in 5th Grade

• LifeThis Week In Writing, we explore our digital legacies, discuss permanence, and close out the year with something new.

What’s the Last Book You Read

• Crafthttps://writingcooperative.com/whats-the-last-book-you-read-5265b44e180e

Success Comes to Those Who Work for It (Usually)

• CraftThis Week In Writing, we talk about success and perseverance through the lens of Simu Liu’s memoir. Oh, and AI writing, too.

Would You Burn Your Entire Archive

• CraftThis Week In Writing, we contemplate throwing out our leftovers and slimming down our digital presence.

Give Thanks to Our AI Overlords

• AIThis Week In Writing, we celebrate Thanksgiving and dive into the ever-improving AI-generated content.

Do You Procrastawrite

• CraftThis Week In Writing, we talk about procrastination and everything we do instead of writing.

Let’s Talk About Money

• FreelancingThis Week In Writing, we talk about earning money as a writer online and check in on NaNoWriMo.

Happy Author’s Day

• CraftThis Week In Writing, we kick off NaNoWriMo by celebrating all the author’s out there, whether published or not.

You’re Invited

• CraftThis Week In Writing, we prepare for NaNoWriMo with a special invitation, but first, we talk about She-Hulk!

Get Ready for NaNoWriMo

• CraftThis Week In Writing, we prepare for National Novel Writing Month (NaNoWriMo) with encouragement and a special offer.

How Do You Deliver Joy

• CraftThis Week In Writing, we discuss how to find your joy and how to spread joy to others.

Let’s Taco ‘Bout Giving the Reader More

• CraftThis Week In Writing, we celebrate National Taco Day by discussing ways to hook the reader and give them more to chew on.

Stop Making Excuses and Write

• CraftThis Week In Writing, we explore excuses we use to avoid writing and discuss methods to get out of our own way.

Are You Registered To Vote?

• LifeThis Week In Writing, we take a quick break from our regularly scheduled programming to encourage you to vote.

Did You Hug Your Boss Today?

• CraftThis Week In Writing, we explore inappropriate workplace dynamics and how that applies to writers.

How Do You Fight Procrastination?

• CraftThis Week In Writing, we explore the bane of most writers’ existence: procrastination. And, yes, it’s different from Writer’s Block.

What Word Makes You Cringe?

• CraftThis Week In Writing, we talk about cringe-worthy words and give a nod to puns, courtesy of Letterkenny.

This Is a Bit Revealing

• CraftThis Week In Writing, I reveal my inner nerd by sharing a personal project. Plus, we look at character creation.

The Stats I Track

• CraftThis Week In Writing, we explore which stats are necessary to track and which are safe to ignore.

Do You Color Outside the Lines?

• CraftThis Week In Writing, we explore taking our writing to places the reader doesn’t expect, like in the film Everything Everywhere All At Once.

Writing Is Exploring The Unknown

• CraftThis Week In Writing, we explore all-or-nothing thinking and learn how to live in the unknown within our work and ourselves.

When Writing Gets Controversial

• CraftThis Week In Writing, we explore the controversial origins of the bikini and how our writing can stoke controversy of its own.

Make Your Writing Space More Comfortable

• CraftThis Month In Writing, we explore simple ways to improve your writing space and the best advice published in June.

You Should Be on Twitter

• Social MediaThis Week In Writing, we explore how I’m shocked how many writers don’t take advantage of Twitter’s potential for writers.

Yoga for Writers

• CraftThis Week In Writing, we get out of the heat and explore yoga as a wellness strategy for creativity and mindfulness.

My Best Advice for Writers: LIVE!

• CraftNext week, I sit down with Sinem Günel to discuss writing, my book, and how you can stay encouraged even when life gets in the way.

You Need a Vacation

• CraftThis Week In Writing, we talk about the importance of pausing because you can’t write all the time.

I Wrote a Book!

• Featured PublishingThis Week In Writing, I announce my new book and provide an update on the Flash Fiction Writing Challenge!

Can You Write Pulp Fiction?

• CultureThis Week In Writing, we celebrate classic pulp fiction and invite you to explore potentially untapped forms of creativity.

Are You Turning Your Tassel Today?

• CraftThis Week In Writing, we celebrate National Graduation Tassel Day by exploring how we level up as writers.

Is Censorship Changing Your Language?

• Social MediaThis Week In Writing, we explore algorithm-driven ‘anglospeak’ and how words and their meanings change over time.

How You Defined Community

• EditorialThis Week In Writing, we reflect on the meaning of ‘community’ by reading through your responses.

Let’s Get Acquainted

• EditorialThis Month In Writing, I introduce myself and explore the future of The Writing Cooperative.

Are You Organized?

• CraftThis Week In Writing, we look at digital organization techniques to keep all of our drafts, research, and ideas safe.

Define: Community

• EditorialThis Week In Writing, we explore the meaning of community and how writers can better connect with each other.

When’s the Last Time You Visited the Library?

• CraftThis Week In Writing, we celebrate and honor every community’s pillar: the local library and library workers.

Does Your Writing Live Long and Prosper?

• CultureThis Week In Writing, we celebrate First Contact Day by exploring one of the best genres out there: sci-fi!

Do You Need a Day Off?

• CraftThis Week In Writing, we celebrate National Goof Off Day by exploring ways to take a break from writing (and yes, you can take a break).

Beware the Ides of March?

• CraftThis Week In Writing, we reclaim the Ides of March and turn it into a day to celebrate and lift writers worldwide.

Celebrate International Women’s Day With These Writers

• CraftThis Week In Writing, we celebrate International Women’s Day but uplifting and honoring our favorite women authors.

It’s Writing Pancake Day!

• CraftThis Week In Writing, we celebrate Fat Tuesday by cleaning out our pantry of darlings. What are you letting go of?

Let’s Talk About Tags

• PublishingThis Week In Writing, we explore Medium’s new design, selecting the best tags, and how to be humble as a writer.

When Did You Start Writing?

• CraftThis Week In Writing, we emphasize that age is but a number when it comes to writing. You can start at any point in your life.

Why Do You Write?

• CraftThis Week In Writing, we reflect on Dickinson and explore an essential question for every writer: why do you write?

Do you need to get up again?

• CraftThis Week In Writing, we celebrate National Get Up Day by refocusing on our priorities and building healthy writing habits.

Wondering Where Our Newsletter Went?

• EditorialThis Month In Writing, we’re brewing a mug of hot chocolate to enjoy while reading some of your best stories.

Feeling Pressure to Write?

• CultureThis Week In Writing, we explore the life and writing lessons about pressure and perfection from the movie Encanto.

How Big Is Your Diction?

• CraftThis Week In Writing, we break out the thesaurus to compare diction size and explore the power of unique words.

How Do You Write at Work?

• CraftThis Week In Writing, we celebrate Poetry at Work Day by exploring ways to write while on the job without getting distracted (or fired).

AI Is Not an All or Nothing Choice

• Featured AIThis Just In: AI use isn't a moral binary. There's a practical middle path for writers.

It’s Time to Rebel from Mass Market Social Media

• Featured Social MediaThis Just In: IT is the villain in Silo. We should learn from those in the Down Deep and rise up.

Platforms Are Getting Much Worse

• PublishingThis Just In: Platforms want us to know exactly who controls the internet. It’s not us, but it can be!

Where Have All the Cowboys Gone

• Social MediaThis Just In: social media is bleeding users, but where are they going?

Let's Make the Internet Personal Again

• Featured PublishingThis Week In Writing, we look at the once-in-a-generation opportunity to create a new internet filled with fun and originality.

My First Year on Mastodon and the Future of Social Media

• Social MediaThis Week In Writing, we look back at how social media fractured and why it’s a good thing for us all.

Saving Frequently Isn’t The Only Way To Backup Your Writing

• CraftThis Week In Writing, we take a hard lesson from the latest Twitter/X hijinks. Plus, we look at what “human writing” means.

AI Is Now Everywhere

• AIThis Week In Writing, we talk about Google’s new AI plan, what it means for writers, and why resistance is futile.

Another Platform Collapses

• Social MediaThis Week In Writing, we talk about Reddit and what it means for centralized communities moving forward.

ChatGPT, the Writer’s Strike, and the Future of Content Writing

• AIThis Week In Writing, we explore a middle-of-the-road approach to ChatGPT and the future of writing

BlueSky, Mastodon, and Notes; Oh, My!

• Social MediaThis Week In Writing, we talk about all the “Twitter Alternatives” and what makes the most sense for writers.

We Have to Talk About Platform Proliferation

• Social MediaThis Week In Writing, we ask why no platform is content on doing one thing well and instead want to do all things poorly.

We Have to Talk About Substack

• Featured PublishingThis Week In Writing, we talk about Diffusion of Innovation Theory and dying platforms.

The Era of Centralized Platforms Is Over

• Featured PublishingThis Week In Writing, we discuss whether you should still own a website if you publish on Medium or Substack.

Introducing My Writing Community!

• EditorialA new way to connect with writers, discuss your interests, and receive feedback on your creative endeavors.

Use Better Words to Be More Inclusive

• CraftThis Week In Writing, we talk about words to avoid in 2023, a special offer from a friend, and Medium joining Mastodon

I Created a New Language in 5th Grade

• LifeThis Week In Writing, we explore our digital legacies, discuss permanence, and close out the year with something new.

Would You Burn Your Entire Archive

• CraftThis Week In Writing, we contemplate throwing out our leftovers and slimming down our digital presence.

The Day Twitter Died

• Social MediaWe’ll be singing, “Bye-bye, Miss American Pie. Drove my Tesla to the office, but there was just one guy.”

This Just in: Will Twitter Verification Save Twitter

• Social MediaElon Musk wants everyone to pay $8/month for Twitter verification, but will that save the platform or alienate people?

The Fate of The Seven Kingdoms

• Social MediaThe future on social media is much like the Game of Thrones. Right now, the only thing missing is a dragon.

Write Now is My Tribe of Mentors

• CraftWhat I learned from Tim Ferriss’ Tribe of Mentors and my answers to his 11 great questions.

Spring Into the Best Twitter Client You’ve Never Heard Of

• Social MediaHow does the Spring Twitter client by Junyu Kuang stack up to Tweetbot and Twitterrific?

How I Edit and Manage The Writing Cooperative

• EditorialWhat writers and editors can learn from my experience editing The Writing Cooperative, one of Medium’s top publications

You Should Be on Twitter

• Social MediaThis Week In Writing, we explore how I’m shocked how many writers don’t take advantage of Twitter’s potential for writers.

How To Disconnect From The Internet Without Going Broke

• Social MediaWe can’t hand our social media accounts to a pricey team as celebrities do—but there are actionable steps we can take toward a healthier relationship with media.

Beware the Ides of March?

• CraftThis Week In Writing, we reclaim the Ides of March and turn it into a day to celebrate and lift writers worldwide.

Is Revue Too Good to be True?

• FreelancingRevue is a newsletter tool that is deeply integrated with Twitter, but is it the right email marketing tool for freelancers?

Let’s Talk About Follower Counts

• Social MediaDo you know how many of your followers are fake? Chances are, it’s a lot more than you think. As a result, following numbers are useless.

How To Make Social Media Great Again

• Social MediaIf you have 30 minutes, you have everything necessary to enjoy social media

Twitter To The Rescue

• Social Media📝 This Week’s Goal: Learn how to leverage Twitter to market your writing and build your audience.

It’s Time to Verify the Internet

• Social MediaTwitter’s new Birdwatch feature is a good step, but more needs to be done.

How To Be A Successful Freelance Writer

• FreelancingEvery choice brings you closer to success. Or does it?

So, You’re New to Medium…

• PublishingAre you new to Medium? Do you want to make the most of your new account and start writing and making money? This is the guide you need.

Stop Choosing Sides on AI

• Featured AIThis Just In: Holding two conflicting ideas about AI isn’t weakness. It's the only honest position.

AI Is Not an All or Nothing Choice

• Featured AIThis Just In: AI use isn't a moral binary. There's a practical middle path for writers.

Unchecked Writing

• AIThis Just In: I stopped using Grammarly; have you noticed? Plus, a deeper exploration into AI writing and my friend the em dash.

Want to Write a Novel in November?

• CraftThis Just In: NaNoWriMo may be dead, but writers have two new options to help hit those writing goals.

AI Exposes the Deeper Rifts in the Writing Industry

• AIThis Just In: Monetization turns passions into sweatshops and AI is making it worse.

AI Killed NaNoWriMo

• AIThis Just In: The writing month challenge may be dead, but there’s a new option to keep writers going.

A Few More Thoughts on Copyright

• AIThis Just In: The history of copyright might be fraught, but it exposes a bigger issue when creating online.

Copyright in the Age of AI

• AIThis Just In: What does copyright do and does it even matter anymore?

Is Reading Dying

• CraftThis Just In: AI summaries and the pivot to video are bad news for the written word.

Are Apple’s Writing Tools the Right Stuff

• AIThis Just In: Apple Intelligence offers the boring version of AI I’ve hoped for, but is it helpful for writers?

Hitting the Reset Button

• PublishingLLM scraping is a virus eating up the internet, but I’m done fighting. Instead, I choose open access and human connection.

Is Generative AI Destroying the Open Web

• AISubscription walls prevent AI scraping, but at what cost? I’m rethinking my whole publishing strategy.

Is Apple Intelligence the AI for the Rest of Us

• AIThis Just In: Apple’s forthcoming entry into AI promises a private, personalized AI, but will it increase AI slop?

Generative AI in Creativity

• AIThe reader survey results have some interesting things to say about generative AI and creativity. Here’s why that’s a problem.

What Is Your Freelance Writing Rate

• FreelancingWriting jobs are evaporating for many reasons, but freelance rates were really bad long before AI came around.

Can We Find a Balance With AI?

• AIThe dichotomy of AI continues to baffle me as I see the good and the bad. Where do we draw the line, and how do we learn to live with this technology?

Don’t Feed the AI Beast

• AIThis Just In: Justin’s writing requires a subscription to prevent AI abuse; consider your own precautions.

An Update on Spam Submissions

• EditorialThis Week In Writing, we talk about spam submissions to The Writing Cooperative and look at some of your thoughts on being called AI.

Would You Want to Know if I Thought Your Writing Sounded Like AI

• EditorialThis Week In Writing, we talk about submissions to The Writing Cooperative and how to avoid false accusations.

Saving Frequently Isn’t The Only Way To Backup Your Writing

• CraftThis Week In Writing, we take a hard lesson from the latest Twitter/X hijinks. Plus, we look at what “human writing” means.

MIT Says ChatGPT Improves Bad Writing, But At What Cost?

• AIThis Week In Writing, we explore how ChatGPT and Grammarly are making us all sound the same.

AI Is Now Everywhere

• AIThis Week In Writing, we talk about Google’s new AI plan, what it means for writers, and why resistance is futile.

The Problem With Creative Entitlement

• AIThis Week In Writing, we explore how AI tools amplify the sometimes problematic relationship between creator and consumer

ChatGPT, the Writer’s Strike, and the Future of Content Writing

• AIThis Week In Writing, we explore a middle-of-the-road approach to ChatGPT and the future of writing

How I Use Midjourney to Create Featured Images for Articles

• AIGenerating unique and interesting featured images, you only need a Discord account and a little patience. Here’s how I use the tool.

AI Is Coming for Content Creators

• AIThis Week In Writing, we look at how AI is changing the content landscape and why that might be a good thing.

What Biases Do You Bring to Your Projects

• CraftThis Week In Writing, we explore biases in our creative pursuits and how those biases can translate to AI-generated content.

Success Comes to Those Who Work for It (Usually)

• CraftThis Week In Writing, we talk about success and perseverance through the lens of Simu Liu’s memoir. Oh, and AI writing, too.

Give Thanks to Our AI Overlords

• AIThis Week In Writing, we celebrate Thanksgiving and dive into the ever-improving AI-generated content.

This Just In: the Robots Are Coming for You

• AIThis Week In Writing, we take a quick break from our regularly scheduled programming to encourage you to vote.

Are Robots Taking Your Job?

• AI📝 This Week’s Goal: Focus on your own writing instead of the robot uprising

Creative Burnout and Why I’m Pausing The Writing Cooperative After 12 Years

• Featured EditorialAlysa Liu's story is relatable and the timing is impeccable.

What Bad Bunny Gets That NBC Doesn’t

• CultureThis Just In: NBC hosted the Olympics, the Super Bowl, and Bad Bunny’s halftime show on the same night, so why was their messaging so poor?

AI Is Not an All or Nothing Choice

• Featured AIThis Just In: AI use isn't a moral binary. There's a practical middle path for writers.

It’s the End of the Year as We Know It (and I Feel Tired)

• LifeThis Just In: It’s time to look back at the year that was and set up some hopes and dreams for the year to come, or something like that.

Unchecked Writing

• AIThis Just In: I stopped using Grammarly; have you noticed? Plus, a deeper exploration into AI writing and my friend the em dash.

The Dream of EPCOT

• LifeThis Just In: Walt Disney’s community of tomorrow is a celebration of humanity and a prototype for how we should live. Maybe we should listen.

It’s Not All About the Benjamins

• Publishing | This Just In: Yet one more thing that Diddy was wrong about.

The Internet Was Doomed From the Start

• Featured Publishing | This Just In: Maybe it’s time to rethink the entire internet.

Want to Write a Novel in November?

• Craft | This Just In: NaNoWriMo may be dead, but writers have two new options to help hit those writing goals.

Answers to a Few Questions

• Craft | This Just In: There were fewer questions than I anticipated, but I will answer them nonetheless.

What Questions Do You Have

• Craft | This Just In: I won’t be participating in Medium Day this year, but I still want to keep the spirit alive. Ask me anything.

What I Did Different With This Book

• Publishing | This Just In: Launching a second edition wasn’t as simple as I thought it’d be, and I learned some lessons along the way.

Introducing Write Now’s Revised Second Edition!

• Featured Publishing | This Just In: You can now access everything I’ve learned writing online over the last two-plus decades. Are you ready for it?

Can We Talk About Comments?

• Publishing | This Just In: Hearing from readers is a lot of fun until you start to get spammed with bots and AI nonsense farming for attention.

Let’s Talk About Tools

• Tech | This Just In: There’s no single tool that can do everything and it’s extremely frustrating.

Battle of the Book Builders

• Tech | This Just In: I tried to format my book using Vellum and Atticus. Instead, I learned something about app design and limitations.

Does My Journal Need a Backup

• Tech | This Just In: I took a lot of your suggestions to heart and gave Obsidian a try. What I found was a bigger question.

Journals Aren’t Forever

• Tech | This Just In: After over 13 years, I’ve deleted the Day One journal app. Here’s what it helped me realize about software subscriptions.

This One Has No Direction

• Burnout | This Just In: Tired, drained, and a little burnt out isn’t exactly the best time to focus on your writing, but it’s why you do it anyway.

AI Exposes the Deeper Rifts in the Writing Industry

• AI | This Just In: Monetization turns passions into sweatshops and AI is making it worse.

The Cost of Rebellion

• Featured Social Media | This Just In: Rebellions are built on hope, but they require individual sacrifices for collective improvement.

Abuse of Power Comes for Nonprofits

• Life | This Just In: Wikipedia’s 501(c)(3) tax exemption is threatened, but not by the IRS.

How to Move to Ghost In 2025

• Publishing | This Just In: Own your own publication by launching a website running Ghost. It’s not as difficult as it sounds.

AI Killed NaNoWriMo

• AI | This Just In: The writing month challenge may be dead, but there’s a new option to keep writers going.

The Age of Reaction

• Social Media | This Just In: We’ve fallen into a dramascroll trap that will be very difficult to climb out of, but it isn’t impossible.

A Few More Thoughts on Copyright

• AI | This Just In: The history of copyright might be fraught, but it exposes a bigger issue when creating online.

Copyright in the Age of AI

• AI | This Just In: What does copyright do and does it even matter anymore?

Tapestry Is Weaving the Future Web

• Tech | This Just In: The Iconfactory’s smash new app is a return to the web’s roots and where we all need to head.

The Cost of Simplification

• Publishing | This Just In: Owning your own platform can be complicated and sometimes simplifying can be costly.

A Bit About Me

• Featured This Just In | This Just In: I answer interview questions that cover my views on writing and more.

The Perils of Personal Platforms

• Publishing | What does it actually mean to leave the world of commercial platforms behind?

Update Those Mute Filters

• Social Media | This Just In: Let’s collectively scream into the infinite abyss, find ourselves, and make the world better.

It’s Time to Rebel from Mass Market Social Media

• Featured Social Media | This Just In: IT is the villain in Silo. We should learn from those in the Down Deep and rise up.

The Forthcoming First Amendment Fight

• Craft | This Just In: So-called defenders of free speech are taking office, and we’re all in trouble. Plus, more predictions for 2025.

What Happens When Everything is Paywalled

• Publishing | This Just In: Wealth is becoming a determining factor in the type of World Wide Web you can access. And I’m not talking about speed.

Platforms Are Getting Much Worse

• Publishing | This Just In: Platforms want us to know exactly who controls the internet. It’s not us, but it can be!

Is Reading Dying

• Craft | This Just In: AI summaries and the pivot to video are bad news for the written word.

Empire Strikes Back Isn’t the End of the Series

• Featured Life | This Just In: Last week sucked, but there is always hope.

Are Apple’s Writing Tools the Right Stuff

• AI | This Just In: Apple Intelligence offers the boring version of AI I’ve hoped for, but is it helpful for writers?

This One’s for the Fans

• Culture | This Just In: Jimmy Buffett gets the due he deserves and shows what creative passion is all about.

When Creating Stops Being Fun

• Craft | This Just In: Knowing when (and how) to hit delete is important for every creator’s sanity.

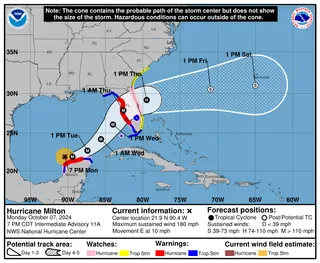

We Shouldn’t Have Taken Milton’s Stapler...

• Life | This Just In: Hurricane Milton is becoming a real problem, and I’m exhausted.

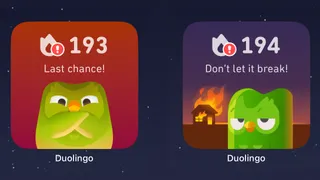

When Gamification Goes Awry

• Tech | Writing days, health rings, Duolingo… there are more streaks than time.

New Phone Who Dis

• Tech | New technology fuels a desire to create but can also be overwhelming and lead to unmet expectations.

Hitting the Reset Button

• Publishing | LLM scraping is a virus eating up the internet, but I’m done fighting. Instead, I choose open access and human connection.

Advice for Medium Writers: Choose Publications Wisely

• Publishing | Just because you CAN submit to a specific publication doesn’t mean you SHOULD.

Medium Day 2024: Questions I Didn't Have Time to Answer

• Publishing | A collection of all the questions I didn’t have time for during my 30-minute Medium Day presentation.

Is Generative AI Destroying the Open Web

• AI | Subscription walls prevent AI scraping, but at what cost? I’m rethinking my whole publishing strategy.

Our Words Are Our Legacy

• Craft | Creativity is a clash between individualism and our connection to history.

Fandom Is Being Ruined by "Fans"

• Featured Culture | How review-bombing and constant, unfounded criticism take agency away from creators

The Downside of Personal Platforms

• Publishing | Creators need to think carefully about their personal sites and build in a way that prevents link rot.

Is Apple Intelligence the AI for the Rest of Us

• AI | This Just In: Apple’s forthcoming entry into AI promises a private, personalized AI, but will it increase AI slop?

Maybe I’m Bad at Social Media

• Social Media | Social media “growth” requires giving in to quantity over quality. I don’t play that game.

Share, But Don’t Spoil

• Publishing | A more personal internet relies on user recommendations but doesn’t spoil their experience.

Let’s Talk About Streaking

• Burnout | This Just In: I’ve racked up a 56-day streak, but not in writing. Plus, I talk about Eurovision.

Chase Your Dreams and See What Happens

• Life | This Just In: Mental health is a massive part of confidence and success. Dreams are inspiration. Use them.

Generative AI in Creativity

• AI | The reader survey results have some interesting things to say about generative AI and creativity. Here’s why that’s a problem.

What Is Your Freelance Writing Rate

• Freelancing | Writing jobs are evaporating for many reasons, but freelance rates were really bad long before AI came around.

Why Criticize When You Can Celebrate?

• Featured Craft | The attention economy destroyed our ability to dream for the sake of page views. It’s time we refocus our attention.

Can We Find a Balance With AI?

• AI | The dichotomy of AI continues to baffle me as I see the good and the bad. Where do we draw the line, and how do we learn to live with this technology?

Where Have All the Cowboys Gone

• Social Media | This Just In: Social media is bleeding users, but where are they going?

Write Like Taylor Swift

• Culture | Embrace life’s many eras and stop trying to be a one-dimensional writer.

Metrics Don’t Matter

• Craft | Have we become so accustomed to seeing metrics everywhere that they no longer mean anything?

Celebrating a Decade on Medium

• Featured Publishing | Looking back at the past ten years of writing on Medium and what comes next.

Creation and Destruction Are Connected

• Craft | This Just In: The act of creating something is more important than the act of publishing what is made.

Don’t Take My Word for It

• Craft | This Just In: Personalized recommendations are the new algorithms and the best way to build a true audience.

Don’t Feed the AI Beast

• AI | This Just In: Justin’s writing requires a subscription to prevent AI abuse; consider your own precautions.

Sending Emails Is Hard

• Publishing | This Just In: Google and Yahoo crack down on bad behavior; set your DKIM, DMARC, and SPF records now.

Why Is Branding So Difficult?

• Publishing | This Just In: This Week In Writing rebrands; still explores the world with creativity and curiosity.

Why Make Anything if You Don’t Think It Will Be Great?

• Craft | This Week In Writing, we discuss greatness and how chasing it is a possible and noble goal.

Pay People Not Platforms

• Publishing | This Week In Writing, we look at why Substack’s collapse is actually a good thing for paid newsletters.

Let's Make the Internet Personal Again

• Featured Publishing | This Week In Writing, we look at the once-in-a-generation opportunity to create a new internet filled with fun and originality.

Raising the Bar at the Writing Cooperative

• Editorial | This Week In Writing, we look at changes to our publication standards and what they mean for you.

Advent, Waiting, and the Year of Transitions

• Life | This Week In Writing, we look back at the year that was and determine what it means for the year to come.

Refilling the Creativity Tank

• Life | This Week In Writing, we discuss what happens when creativity finds other outlets.

Celebrate Giving Tuesday

• Life | This Week In Writing, we take a quick break from our regularly scheduled programming to celebrate nonprofit organizations.

It’s Time We Discuss Medium

• Publishing | This Week In Writing, we address the platform that has supported my writing for nearly a decade.

My First Year on Mastodon and the Future of Social Media

• Social Media | This Week In Writing, we look back at how social media fractured and why it’s a good thing for us all.

The Economics of a Self-Hosted Newsletter

• Publishing | This Week In Writing, we talk about what happens when you eliminate platforms and go after it on your own.

Trick or Treat?

• Craft | This Week In Writing, we talk about pen names and whether they make sense for writers.

A New Era Begins

• Publishing | This Week In Writing, we explore the internet’s current metamorphosis and how you can be part of the revolution.

My History of Blogging

• Publishing | This Week In Writing, we celebrate the blog, explore the pendulum of online writing, and double down on quality.

An Update on Spam Submissions

• Editorial | This Week In Writing, we talk about spam submissions to The Writing Cooperative and look at some of your thoughts on being called AI.

Would You Want to Know if I Thought Your Writing Sounded Like AI

• Editorial | This Week In Writing, we talk about submissions to The Writing Cooperative and how to avoid false accusations.

How I Feel About Engagement Numbers

• Publishing | This Week In Writing, we discuss what engagement means and if I get discouraged by a perceived lack thereof. Plus, a look at the future (again).

My Writing Is About Building Community

• Publishing | This Week In Writing, we highlight some of the people I’ve met writing online and answer some of your questions.

It’s Time for a Fresh Start

• Publishing | This Week In Writing, we talk about new Apple products, home renovations, and changes to the newsletter.

Choose Your Own Design

• Publishing | This Week In Writing, we explore the wonderful world of blogs, where writers truly get creative.

Expanding Universes Make Better Stories

• Culture | This Week In Writing, we look at how worldbuilding is an essential part of epic storytelling.

Your Questions Answered

• Editorial | This Week In Writing, we recap a successful Medium Day and address some of the questions I didn’t have time to answer.

Saving Frequently Isn’t The Only Way To Backup Your Writing

• Craft | This Week In Writing, we take a hard lesson from the latest Twitter/X hijinks. Plus, we look at what “human writing” means.

MIT Says ChatGPT Improves Bad Writing, But At What Cost?

• AI | This Week In Writing, we explore how ChatGPT and Grammarly are making us all sound the same.

Do CTAs Even Work Anymore?

• Publishing | This Week In Writing, we explore the “necessary evil” of calls to action and ask if they are any better than tacky banner ads.

AI Is Now Everywhere

• AI | This Week In Writing, we talk about Google’s new AI plan, what it means for writers, and why resistance is futile.

My Ghostly Strategy: Avoid the Graveyard

• Publishing | This Week In Writing, we fully explore how I’m building Ghost into a self-hosted content hub and how you can too.

Another Platform Collapses

• Social Media | This Week In Writing, we talk about Reddit and what it means for centralized communities moving forward.

This Just In Comes Home

• Publishing | Welcome to the first issue of This Just In completely managed from my website!

How Do You End Things Well

• Culture | Succession and Ted Lasso ended last week. Both had a distinct impact on culture and were met with intense anticipation despite relatively small audiences. Don't worry, there aren't any real spoilers in this article. I enjoyed both endings for different reasons. Succession brought a sense of

My Return to Journaling Failed Miserably

• Life | This Week In Writing, we talk about good intentions, rumored Apple products, and buying domain names

Let's Talk About Numbers

• Publishing | This Week In Writing, we talk about the importance of metrics and why I barely pay attention to mine.

ChatGPT, the Writer’s Strike, and the Future of Content Writing

• AI | This Week In Writing, we explore a middle-of-the-road approach to ChatGPT and the future of writing

BlueSky, Mastodon, and Notes; Oh, My!

• Social Media | This Week In Writing, we talk about all the “Twitter Alternatives” and what makes the most sense for writers.

On Tennis and Writing Breaks

• Life | This Week In Writing, I discuss my prolonged break from daily writing and follow up on last week’s Substack article.

Stop Creating Quantity and Start Creating Quality

• Editorial | This Week In Writing, we discuss Medium’s new Boost program and why the vast majority of submissions lately have been atrocious.

How I Use Midjourney to Create Featured Images for Articles

• AI | To generate unique and interesting featured images, you only need a Discord account and a little patience. Here’s how I use the tool.

You Have Questions, I May Have Answers

• Craft | This Week In Writing, we celebrate International Question Day by listening to Selena Gomez. What do the two have in common? Keep reading!

The Era of Centralized Platforms Is Over

• Featured Publishing | This Week In Writing, we discuss whether you should still own a website if you publish on Medium or Substack.

How Will History Remember Your Writing?

• Craft | This Week In Writing, we talk about the magic found in old books

How I Come Up With Writing Topics

• Culture | This Week In Writing, we explore topic generation while celebrating the best damn band in the land!

Introducing My Writing Community!

• Editorial | A new way to connect with writers, discuss your interests, and receive feedback on your creative endeavors.

Are You Begging for Eyes in the Attention Economy

• Featured Publishing | This Week In Writing, we explore the internet’s move away from the attention economy and how writers can make the web more personal

Use Better Words to Be More Inclusive

• Craft | This Week In Writing, we talk about words to avoid in 2023, a special offer from a friend, and Medium joining Mastodon

Welcome to 2023. Now Take A Nap.

• Craft | This Week In Writing, we kick off a new year with a chat about goals, self-care, and naps.

I Created a New Language in 5th Grade

• Life | This Week In Writing, we explore our digital legacies, discuss permanence, and close out the year with something new.

What’s the Last Book You Read

• Craft | https://writingcooperative.com/whats-the-last-book-you-read-5265b44e180e

Would You Burn Your Entire Archive

• Craft | This Week In Writing, we contemplate throwing out our leftovers and slimming down our digital presence.

Do You Procrastawrite

• Craft | This Week In Writing, we talk about procrastination and everything we do instead of writing.

Let’s Talk About Money

• Freelancing | This Week In Writing, we talk about earning money as a writer online and check in on NaNoWriMo.

Happy Author’s Day

• Craft | This Week In Writing, we kick off NaNoWriMo by celebrating all the authors out there, whether published or not.

You’re Invited

• Craft | This Week In Writing, we prepare for NaNoWriMo with a special invitation, but first, we talk about She-Hulk!

Get Ready for NaNoWriMo

• Craft | This Week In Writing, we prepare for National Novel Writing Month (NaNoWriMo) with encouragement and a special offer.

How Do You Deliver Joy

• Craft | This Week In Writing, we discuss how to find your joy and how to spread joy to others.

Let’s Taco ‘Bout Giving the Reader More

• Craft | This Week In Writing, we celebrate National Taco Day by discussing ways to hook the reader and give them more to chew on.

Stop Making Excuses and Write

• Craft | This Week In Writing, we explore excuses we use to avoid writing and discuss methods to get out of our own way.

Did You Hug Your Boss Today?

• Craft | This Week In Writing, we explore inappropriate workplace dynamics and how that applies to writers.

How Do You Fight Procrastination?

• Craft | This Week In Writing, we explore the bane of most writers’ existence: procrastination. And, yes, it’s different from Writer’s Block.

This Just In: Thank You, Subscribers

• Publishing | I don’t know who you are, but I’m grateful for your support, and I hope you enjoy all the things you read.

What Word Makes You Cringe?

• Craft | This Week In Writing, we talk about cringe-worthy words and give a nod to puns, courtesy of Letterkenny.

This Is a Bit Revealing

• Craft | This Week In Writing, I reveal my inner nerd by sharing a personal project. Plus, we look at character creation.

The Stats I Track

• Craft | This Week In Writing, we explore which stats are necessary to track and which are safe to ignore.

Do You Color Outside the Lines?

• Craft | This Week In Writing, we explore taking our writing to places the reader doesn’t expect, like in the film Everything Everywhere All At Once.

Writing Is Exploring The Unknown

• Craft | This Week In Writing, we explore all-or-nothing thinking and learn how to live in the unknown within our work and ourselves.

Write Now is My Tribe of Mentors

• Craft | What I learned from Tim Ferriss’ Tribe of Mentors and my answers to his 11 great questions.

When Writing Gets Controversial

• Craft | This Week In Writing, we explore the controversial origins of the bikini and how our writing can stoke controversy of its own.